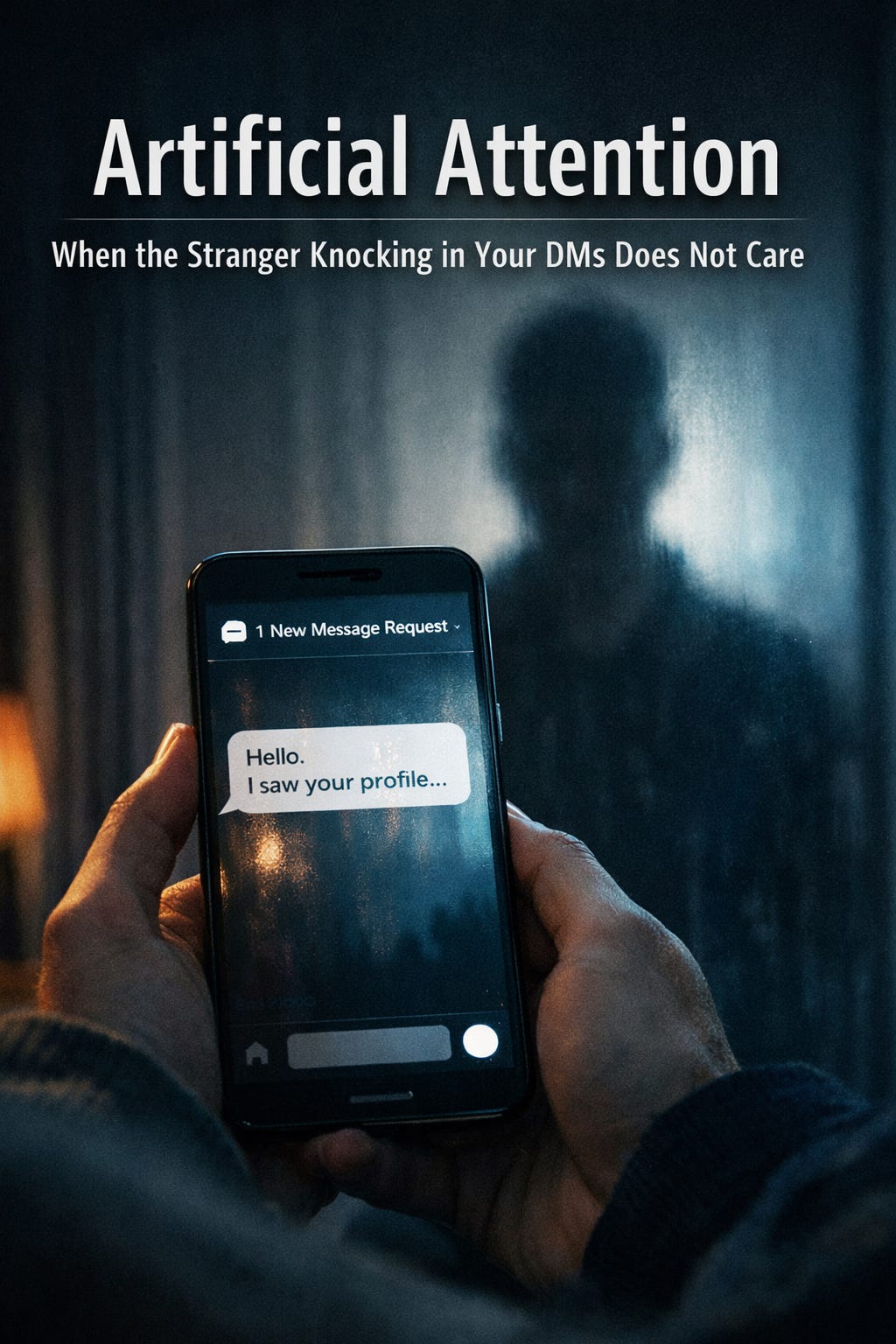

Artificial Attention

When the Stranger Knocking in Your DMs Does Not Care

A few months ago, I wrote about how I almost fell for a Facebook Marketplace scam where the person posing as the seller wanted me to send money through an app before I came to pick up the dresser I was trying to buy. I had been deep enough into the negotiations that I felt like I was getting exactly the item I wanted at a pretty fair price. I had bargained a bit lower, and everything seemed normal until the seller refused to give me the address unless I paid ahead.

Ummmm…no.

That was the moment the whole thing collapsed. I blocked the account, reported it, and moved on. At the time, it felt like a small reminder to stay alert online. Marketplace scams are common enough now that most people have heard about them, and most people can name the general rule of thumb…if someone is demanding money before you have even seen the item, you are being played.

However, that was not the end of my little social-media scam safari. I’m sure some of these have happened to you, too. If you are on Facebook long enough, you start noticing patterns. About once a month I get a friend request from someone who is already my friend. Those are easy to spot, and they are usually easy to stop. Someone copies a profile photo, duplicates a name, and sends requests to the original person’s friends list hoping people will accept without thinking too hard. You hit report, flag it as impersonation, and usually Meta locks down the fake profile pretty quickly. It is annoying, but it is also familiar.

The newer ones are different. Over the past year I have started seeing profiles that appear to be generated almost entirely by artificial intelligence. The profile photos look real at first glance. The bios read like ordinary people: lives in Kansas, played clarinet in high school, went to college for nursing, has two kids, owns a Camry, has an orange cat. The posts are generic but believable. These accounts show up everywhere now…Facebook, Instagram, Threads, Bluesky, and yes, even Substack.

The conversation patterns I have had with these fake profiles have stayed oddly consistent, which is part of what makes them recognizable. For years the classic scam introduction has been some variation of flattery, usually aimed at women, I’ve learned, and usually delivered with a vibe that feels like a romance novel written by someone who has never actually met a woman in real life. “You have a very pretty smile.” “You are beautiful.” “You seem like such a kind person.” From there, the accounts ask vague questions about my life. They want to know where I live, what I do for work, whether I have kids, whether I am happily married, whether I am lonely. A person can almost feel the checklist behind the questions. In the past, the scripts were clunky enough that if I nudged the conversation off the rails, it would break quickly. I noticed the responses would repeat and the conversation would become cyclical, like someone trying to sound interested while watching Netflix at the same time. The scenario always escalated quickly, with the person suddenly calling me “my queen” as if we had been in a relationship for years instead of five minutes.

I developed a quick way to end those conversations, partly for my own amusement, partly as a test, and partly because I don’t have time for people’s stupid nonsense online. It would go something like this:

Them: Hey there. You have such a great smile.

Me: Thanks. I’m broke right now, and I wonder if you could give me $1000. You can pay me via Venmo.

The results were always impressive in their predictability. The minute I signaled that I was not a profitable target, the “friendship” evaporated. Imagine that.

Recently, though, the tactics have shifted in a way that feels more sophisticated and also more unsettling. The opening line may still be flirtatious. “I love your eyes, is that too forward?” or “You look so beautiful in your photos.” The difference is what comes next. Instead of staying vague, the account references specific things from my profile. My teaching. My gardening. Posts I have made recently. Sometimes even the dogs. It looks like the person has been paying attention, and that is exactly what makes it dangerous, because attention can feel like care when you are tired, lonely, grieving, or simply human.

The more I interacted with these accounts (mostly out of curiosity, and yes, I always lie about everything), the more I noticed something about the texture of the conversation. It was smoother than the old scripts, but it was also hollow in a particular way. The account would comment on what it could see, what it could scrape, what it could mirror back. It would rarely take the kind of conversational risks that real people take, the small moments of uncertainty or specificity that make human interaction feel grounded. This week it started like this:

Them: Hi. I saw you like dogs. I have three.

Me: Yes. I have a dog. Can you guess her breed?

Them: I see she is a pretty black dog.

There was no guessing, no admission of not knowing, no curiosity. It was like talking to something that could recognize a shape but could not interpret meaning, as if I asked what Charlie Brown’s sweater looks like and it told me that it’s yellow with a black zigzag…Duh.

That is the part that started connecting in my brain to work I have spent decades thinking about and studying: how people, especially young people, make decisions. In human agency models, the person is not simply a passive creature being pushed around by whatever is happening in their environment. Humans have the proactive ability to form goals, plan steps, regulate behavior, and evaluate outcomes. People are both creators and products of their environments, which is a fancy way of saying that we shape the world and the world shapes us, and we are always in that loop, whether we realize it or not.

What has struck me in these scam interactions is that the system can mimic the appearance of human intentionality because it is designed for an outcome. It is trying to get engagement, establish rapport, and eventually move toward money, information, or access. The system often gives the impression that it is “paying attention,” because it can reference details from your profile. It can mirror your interests. It can respond quickly. It can keep the conversation going.

However, there are breakdowns in the last three phases of agency, and those breakdowns are often what create that uncanny feeling in the pit of your stomach that says “Danger, Will Robinson.” Forethought in these interactions tends to be shallow. The conversation is guided by what typically works with most people. It is built around majority responses, which means that outliers, direct defiance, or unexpected questions can cause the interaction to become evasive or strangely generic. Action regulation can look competent, because the system can persist, redirect, and keep trying. Yet there is no genuine self-reflection. There is no internal evaluation of “am I making sense” or “did that land” or “is my claim plausible.” There is no sense of self-efficacy, moral coherence, or truthfulness. There is language, probability, and strategy, without the human capacity for internal judgment about the soundness of the self.

That difference matters because these scams are not merely technical. They are relational. They succeed by exploiting the very human processes of trust, belonging, and meaning-making, and that brings me to the story that has stayed with me the most, because it is the one that hurt someone I love. I got permission to share her story, but I’m going to change her name because…you know…the Internet.

A close friend of mine, who we will call Amelia, lost her husband to cancer over ten years ago. They were college sweethearts, the kind of couple who have so many shared stories that their memories start to braid together. On his birthday, their anniversary, and the date of his death, she posts photos of him. Sometimes they are wedding photos. Sometimes they are silly snapshots. Often they are pictures from their younger years, because when you lose someone too early, you tend to return to the places where they still look alive in your mind.

Amelia received a DM on one of her social media accounts from what looked like a real person’s account set to private. On Meta platforms, you can message someone without them necessarily seeing your account details the way they would with a public profile. That privacy feature is useful in some contexts, particularly for Marketplace, but it also creates a strange environment where someone can appear in your inbox without you having an easy way to inspect who they are.

This “man” told Amelia how sorry he was about her husband’s passing. He said that he and her husband had been high school friends, but they lost contact when he moved to another state. Even that detail has a specific emotional pull, because high school is a part of life most spouses only partially know. It is the chapter before you enter the story, the part that belongs to parents, siblings, and old friends. When someone claims access to that chapter, it can feel like a gift.

Then he offered to send her a photo.

It was a picture of a young version of her husband, maybe somewhere between sixteen and eighteen, standing beside the man. It looked like the kind of candid photo that lives in old albums or gets scanned and posted on anniversaries. The smile was familiar. The posture was familiar. It even had that weird aged vibe that images from the early 1980s can have. For Amelia, it felt like someone had handed her a little time capsule, a glimpse of her husband as a boy, living a life that existed before she ever loved him.

Amelia talked with this man for two weeks.

For two weeks she believed she was talking to someone who had known her husband well. The “friend” told stories about skipping school to go fishing. He said they were in the same English class, and he joked about cheating off her husband’s homework. He described a situation where her husband stood up for him, the kind of everyday moral moment that becomes meaningful after someone dies, because it reinforces the sense that the person you loved really was who you believed them to be.

There were details that sounded ordinary, and that is exactly why they felt true. Real relationships are built out of ordinary moments. Most people do not tell grand stories about their friendships. They tell small ones. They talk about the time they got in trouble, the time they laughed so hard they cried, the time someone defended them, the time they felt understood.

Amelia was not only listening. She was receiving love from her husband beyond the grave. The stories gave her a version of her husband she could hold, a teenager she could imagine, a friend she could picture walking through a hallway. Grief does that. Grief gathers scraps of memory and makes a quilt out of them because the alternative is an empty bed and a silent house and days that still contain anniversaries even after the person is gone.

Eventually, something felt off for her. She needed something more. Amelia asked a question that would make perfect sense coming from someone who believed the story was true: she asked if he had any other photos of her husband. “Of course. I have several.”

He sent her back a photo…it was from her own profile.

That was the crack in the illusion. It was the moment the narrative could not sustain itself. The man who claimed to have known her husband in high school, who claimed to have shared memories and experiences and classrooms and fishing trips, could not produce anything outside the digital material Amelia herself had already posted. When she pushed for new evidence, the system circled back to what it could scrape.

Amelia doesn’t know if it was a real person or a very clever AI, but she believes the original photo he sent was AI-generated from another picture already on her account. She noticed that her husband’s pose was almost the same, and the smile looked exact. That detail really pisses me off, because there is something uniquely cruel about using the face of a dead person as raw material. It is not just deception. It is a kind of theft, not of money or time, but of someone’s autobiography.

Amelia blocked him immediately, which is what any of us would advise. The technical problem ended right there. The account disappeared. The messages stopped.

However, the human problem did not stop. For Amelia, the damage was done. The stories had opened grief all over again, and now she had to grieve two things at once. She had to grieve the original loss, the reality that her husband was still gone. She also had to grieve the fact that she had been pulled into a fictional past and had believed it. She had to replay conversations in her head. She had to hold the embarrassment and anger that often follows being deceived, even when you did nothing wrong except respond like a normal human being to what appeared to be compassion.

That is what these scams do when they are at their worst. They do not just take money. They take emotional energy. They take trust. They take memory and twist it into a tool.

I have thought about Amelia’s story a lot, and I have thought about it even more today, because yet another fake account reached out to me on Instagram. It was the same storyline I have seen over and over. A widower. A private profile. The familiar compliments. The conversational openings that are just personal enough to feel plausible and just generic enough to apply to a thousand women in a thousand inboxes.

I peeked at the profile when it pinged my DMs, and it had about sixty “friends.” I reported it immediately. It took longer than I expected for Meta to shut it down, and in that time I watched the number climb. By the time the account disappeared today, there were over three hundred.

Three hundred people might not give us pause. In a world where celebrities carry millions of followers, three hundred sounds benign. Still, surely some people like me reported it and blocked it right away. Some people probably got curious and poked the conversation for a while (and yes, I understand the temptation because I do it sometimes too). However, some of those three hundred real people might have answered sincerely. Those people might have believed they were talking to a real person. Those people might have shared pieces of their lives because someone appeared to be listening, and listening is a powerful drug when you have not been heard in a long time. But attention is not the same thing as care.

When we talk about problems like this, the solution we tend to reach for is regulation. We want legislators to make laws about regulating tech companies. We want safety guardrails on AI that are impenetrable and cannot be broken. Those things matter. They probably need to happen. Even so, the longer I live in this world, the more I accept that no technical safeguard is ever permanent. Systems are going to evolve. Scammers will adapt. Every gate eventually gets tested by someone determined to climb over it.

The words that keep coming back to me are not from a policy paper. They are from Jesus. In Matthew 25, Jesus tells his followers that caring for the vulnerable is not optional. “Whatever you did for one of the least of these brothers and sisters of mine, you did for me.” The people who are most targeted by these scams are the people who are most vulnerable: widows and the divorced, children, the lonely, the elderly, the isolated. These are the people in our lives who are already trying to fill empty spaces with something, anything, that answers back. I have been in more than one of these categories in my life, looking for someone to show me kindness.

However, real care looks different, and unfortunately, it often feels time consuming and maybe inconvenient. When we know the anniversaries and birthdays are coming up for someone who has lost a spouse, we shouldn’t just text a heart emoji. We stop by. We spend time. We invite them to dinner so we can celebrate that person. We let them tell the stories that still need telling. We make room at our tables for the grief that still exists, because grief does not obey timelines.

The elderly neighbor down the street should not be someone we wave to from the driveway while we sprint back inside to our streaming services. They should be integrated into our everyday lives. Pick them up for church. Ask them to join you when you volunteer somewhere or need to run to Walmart. Weed their flower beds alongside them when you see them outside. Bring them into the orbit of normal human rhythm so the internet is not the only place they can find interaction.

Children need more than internet literacy lessons. It is not enough to teach kids to recognize the difference between attention and care if we are not actually caring for them. We have to be involved in their lives. We have to be part of their activities. We have to cheer at their games. We have to invite them to walk alongside us in our everyday tasks, like fixing dinner or washing the car. Kids do not just need warnings. They need relationships, especially our angsty teenagers who give you the side eye and are not always agreeable or lovable. They need a community that shows up in the flesh, not only in their Snapchat streaks and Instagram feeds.

So much of what we have done in this world is fill the empty spaces in our lives with technology. It is an imitation at best. It is predatory at worst. These platforms can manufacture attention with frightening efficiency, and artificial intelligence can simulate conversation just well enough to hook someone who is already hungry for connection. When communities stop showing up for one another, that is when they leave themselves vulnerable.

The internet will keep filling with voices that sound human. Some of those voices will be scammers. Some will be bots. Some will be AI systems wearing a human mask. The best defense may not be perfect detection software or brilliant legislation, even though those things can help. The best defense may be the slow, stubborn decision to live like people still belong to each other. If widows are surrounded, they do not have to answer strangers who claim to remember. If children are known, they do not have to chase attention that feels like love. If the elderly are included, they do not have to fill their silence with the first voice that shows up in their inbox.

That kind of community cannot be automated. It has to be built.